July 18, 2025

Benchmarking Translation Engines: A Comparative Study

Many translation engines offer varying levels of performance and quality. But which one should you choose?

MachineTranslation.com studied various top machine translators available on our AI-powered translation aggregator. We analyzed the leading engines based on key metrics to find the best balance of speed and accuracy.

The top machine translation engines we reviewed are DeepL, Google, ChatGPT, Microsoft, Lingvanex, Modern MT, Royalflush, Niutrans, and Groq.

Detailed Comparison of Top Translation Engines

Our AI-powered machine translation aggregator collected extensive data from user translations and interactions. With this data, we analyzed two key metrics: average translation scores and processing times.

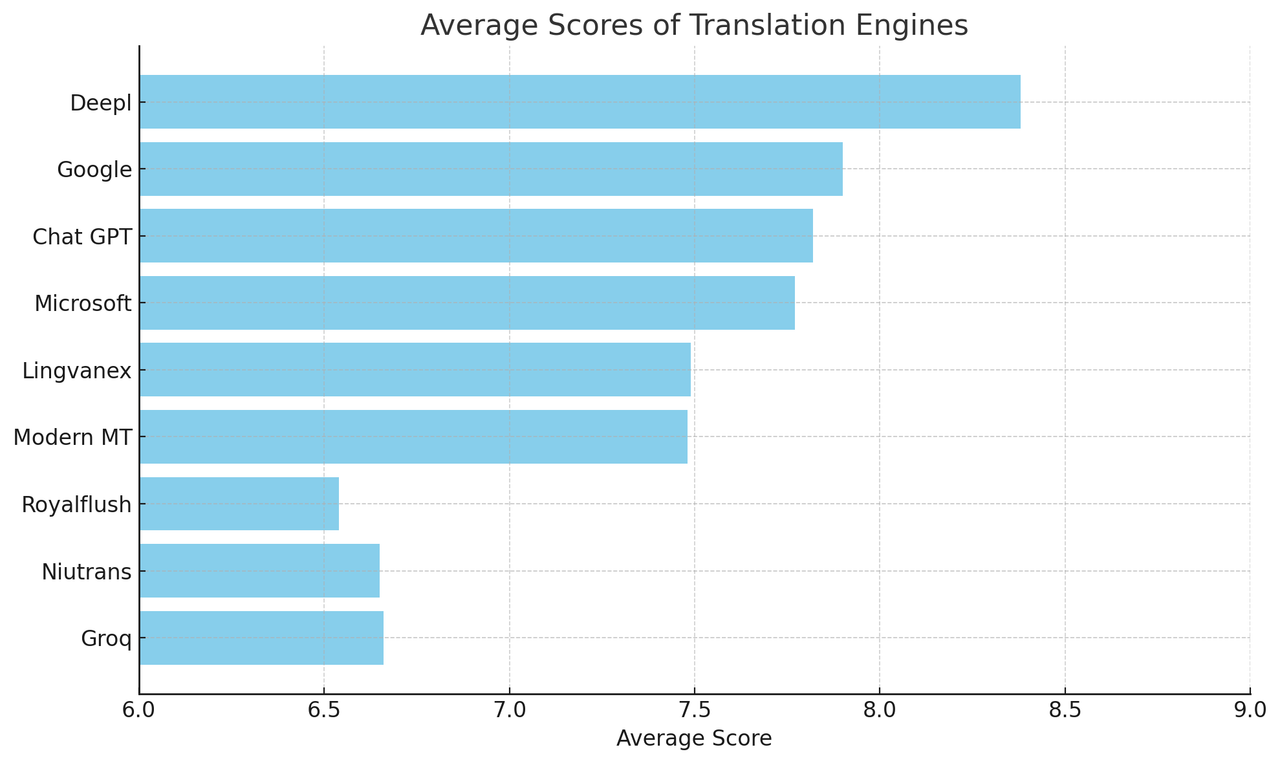

Average Scores of Translation Engines

The average score is a key indicator of the translation quality produced by each engine. Scores come from feedback on translated texts, rated on clarity, coherence, and the extent of required edits. The results presented in this article may vary and are subject to change based on ongoing feedback and research.

Here are the average scores for the leading translation engines:

- DeepL: 8.38
- Google: 7.90
- ChatGPT: 7.82
- Microsoft: 7.77
- Lingvanex: 7.49
- Modern MT: 7.48
- Royalflush: 6.54
- Niutrans: 6.65
- Groq: 6.66

This chart illustrates the average scores for each engine.

Based on the chart above, DeepL has the highest average score, indicating the strongest translation quality among the nine engines scored. Google and ChatGPT also perform well, followed closely by Microsoft.

Lingvanex and Modern MT are moderate performers, delivering satisfactory quality that falls short of the top performers. Royalflush, Niutrans, and Groq have the lowest average scores, meaning their translations often need more edits.

Read more: Languages Supported by Popular Machine Translation Engines

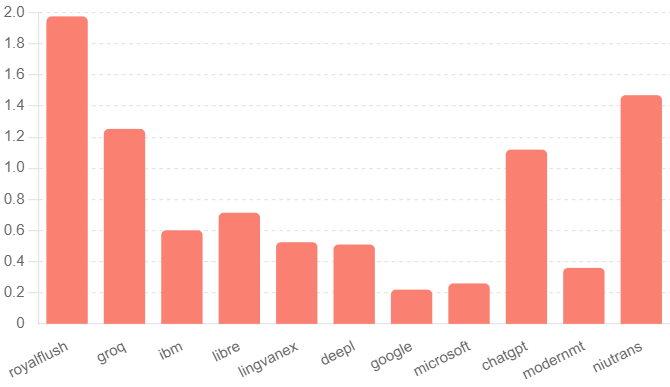

Processing Time of Different Engines

Processing time is a crucial metric reflecting a translation engine's efficiency. Faster processing times are essential for real-time translations. Here are the average processing times for each engine:

- Google: 0.22 seconds
- Microsoft: 0.26 seconds
- Amazon: 0.33 seconds
- Modern MT: 0.36 seconds
- Lingvanex: 0.45 seconds
- DeepL: 0.51 seconds
- ChatGPT: 1.12 seconds
- Niutrans: 1.47 seconds
- Royalflush: 1.83 seconds

This chart showcases the average processing time of each machine translation engine.

From the chart above, Google, Microsoft, and Amazon are the fastest, making them ideal for quick translations. Modern MT, Lingvanex, and DeepL have moderate speeds.

ChatGPT, Niutrans, and Royalflush are the slowest, which can be a drawback in time-sensitive situations.
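For readers who want to weigh speed against quality, the following is a minimal sketch of one possible approach, not the method used in this study. It min-max normalizes both metrics and blends them with a weight. The function name `rank_engines`, the parameter `quality_weight`, and the normalization scheme are assumptions made for illustration. Only the eight engines that appear in both lists above are included.

```python
# Average score and average processing time (seconds), copied from the lists above.
# Engines missing from either list (Groq, Amazon) are left out.
ENGINES = {
    "DeepL": (8.38, 0.51),
    "Google": (7.90, 0.22),
    "ChatGPT": (7.82, 1.12),
    "Microsoft": (7.77, 0.26),
    "Lingvanex": (7.49, 0.45),
    "Modern MT": (7.48, 0.36),
    "Royalflush": (6.54, 1.83),
    "Niutrans": (6.65, 1.47),
}


def min_max(values):
    """Rescale a list of numbers to the 0..1 range."""
    low, high = min(values), max(values)
    return [(v - low) / (high - low) for v in values]


def rank_engines(quality_weight=0.5):
    """Return engine names ordered from best to worst blended score.

    quality_weight=1.0 ranks on quality only, 0.0 on speed only.
    This weighting is a hypothetical illustration.
    """
    names = list(ENGINES)
    quality = min_max([ENGINES[n][0] for n in names])
    # Lower time is better, so invert the normalized time.
    speed = [1.0 - t for t in min_max([ENGINES[n][1] for n in names])]
    blended = {
        n: quality_weight * q + (1.0 - quality_weight) * s
        for n, q, s in zip(names, quality, speed)
    }
    return sorted(names, key=blended.get, reverse=True)


if __name__ == "__main__":
    for weight in (0.0, 0.5, 1.0):
        print(weight, rank_engines(weight))
```

Changing `quality_weight` shifts the ranking toward whichever metric matters more for a given use case.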

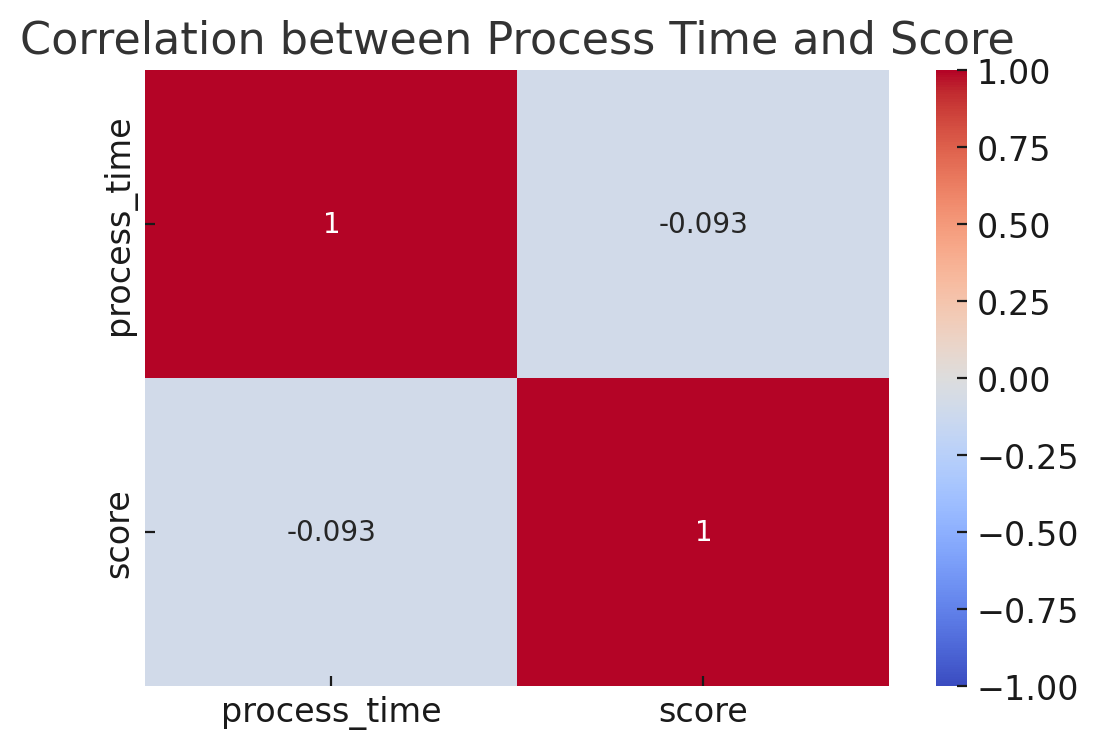

Correlation Between Process Time and Score

Heatmap Matrix

This chart shows almost no correlation between translation speed and quality.

To see if there's a relationship between processing time and translation quality, we analyzed the correlation between these two metrics. The chart above shows a correlation coefficient of about -0.093, indicating a very weak negative correlation. In other words, translation speed has little bearing on quality, and the two metrics are mostly independent.
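As a rough illustration of how such a coefficient can be computed, here is a minimal sketch that assumes the Pearson formula (the article does not name the coefficient used). The helper `pearson` is a hypothetical name. The inputs are the engine-level averages published in this article for the eight engines that have both a score and a time. The article does not state what data its -0.093 figure was computed from, so this sketch is not expected to reproduce that number.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Engine order: DeepL, Google, ChatGPT, Microsoft, Lingvanex,
# Modern MT, Royalflush, Niutrans (values from this article).
scores = [8.38, 7.90, 7.82, 7.77, 7.49, 7.48, 6.54, 6.65]
times = [0.51, 0.22, 1.12, 0.26, 0.45, 0.36, 1.83, 1.47]

r = pearson(times, scores)
print(f"r = {r:.3f}")
```

A value near 0 indicates little linear relationship, while values near -1 or 1 indicate a strong one. With only eight aggregated data points, any result from this sketch should be read with caution.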

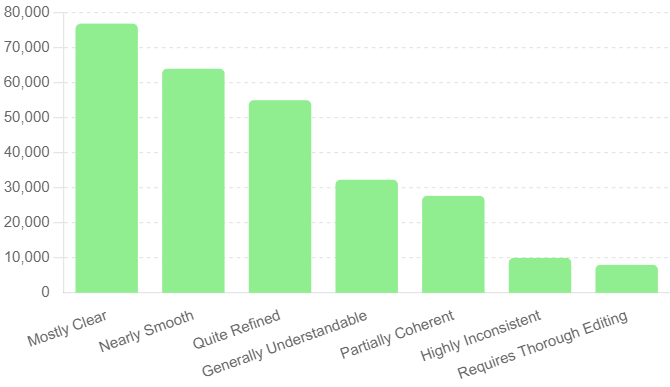

Insights into Feedback Analysis

Feedback offers valuable insights into translation quality. Here are the most common feedback types from MachineTranslation.com's aggregator and their frequency:

- Mostly Clear (needs only some revisions): 76,877 instances
- Nearly Smooth (tweaks are optional): 64,001 instances
- Quite Refined (may benefit from light edits): 55,030 instances
- Highly Inconsistent (requires substantial edits): 32,301 instances
- Requires Thorough Editing: 27,697 instances
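To put these counts in proportion, the short sketch below converts them to shares. The names `FEEDBACK_COUNTS` and `shares` are hypothetical, and the percentages are relative to the five listed feedback types only, not to all feedback collected.

```python
# Counts copied from the list above.
FEEDBACK_COUNTS = {
    "Mostly Clear": 76_877,
    "Nearly Smooth": 64_001,
    "Quite Refined": 55_030,
    "Highly Inconsistent": 32_301,
    "Requires Thorough Editing": 27_697,
}

total = sum(FEEDBACK_COUNTS.values())
# Share of each feedback type among the five listed types.
shares = {label: count / total for label, count in FEEDBACK_COUNTS.items()}

for label, share in shares.items():
    print(f"{label}: {share:.1%}")
```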

Beyond these common feedback types, we ran a further analysis to give a more accurate picture of the translation quality from our AI-powered aggregator, as shown in the chart below.

Our AI-powered translation aggregator produces "mostly clear" output based on its internal feedback analysis.

The chart above displays our AI-powered aggregator's internal feedback analysis for the translated content. The highest average scores are for "Outstandingly Clear", "Nearly Smooth", and "Quite Refined".

"Outstandingly Clear" has the highest average score, indicating minimal need for edits. "Nearly Smooth" and "Quite Refined" have a similar average score of around 7.5-8, suggesting good quality with minor improvements needed.

Meanwhile, the lowest scores are for "Highly Inconsistent" and "Requires Thorough Editing", with average scores below 5, indicating significant translation issues.

Read more: Best Machine Translation Engines Per Language Pair

Conclusion

Our study identifies the strengths and weaknesses of different translation engines. The findings on the machine translators in this article may change as we continue to research and develop our AI-powered aggregator.

These findings can help businesses and individuals choose the best translation engine for their specific needs, whether they prioritize speed, quality, or a balance of both. If you want to try the machine translation engines mentioned in this article, visit our homepage. You can also sign up for our free subscription plan, which gives you 1,500 credits per month.